Late last century, biochemists thought: if we can identify all genes and their functions, we will understand how humans, animals, and other organisms work. This proved a pipe dream. Genes are only the blueprint for proteins, and the proteins are the actual workhorses in our cells. To really understand biological processes, it is not enough to study the blueprint alone. Ruedi Aebersold, professor emeritus at the ETH Zurich in Switzerland, and Matthias Mann, professor at the Max Planck Institute for Biochemistry in Martinsried, realised that you also have to research the complex interplay between all proteins, and between proteins and other molecules. In doing so, they pioneered a new field that studies the collection of all these proteins: proteomics.

‘Initially, people thought there was a clear pathway from a specific gene to a specific function,’ Aebersold explains. ‘A piece of DNA forms the code for a specific protein, and that protein performs a specific function in the cell, or so the thinking was. We now know that biology is much more complex.’ The main reason: one protein typically does not perform one specific function but interacts with all kinds of different proteins or other molecules to perform different functions. ‘You can compare it to our society,’ says Aebersold. ‘In society, people have different roles as well. For example, someone is a biochemist, a father, and the coach of a football team. In all these roles, he works with different people in different contexts, performing all kinds of different functions together. We want to identify the properties of all 5 to 10 billion protein molecules in a cell. On the one hand, we want to know the characteristics of proteins: how heavy are they, what is their structure? And on the other hand, we want to know how they interact with each other in the context of a cell and what functions they perform with these interactions.’

Aebersold and Mann were instrumental in developing the techniques to make this possible. The first step is to identify which proteins are present in a cell. Proteins are very long chains of 20 different basic building blocks: the amino acids. These chains are folded together in an intricate way. The identity of each protein is defined by the sequence of its amino acids.

Peptide puzzle

One of the main techniques you can use to identify a protein is mass spectrometry. You could see a mass spectrometer as fancy scales. But ‘weighing’ proteins as a whole does not offer enough information about their identity. This way, you cannot determine the sequence of the hundreds to thousands of amino acids that make up the protein. Therefore, biochemists first cut up proteins into smaller pieces called peptides and determine the masses of those. They then chop up such peptides into even smaller pieces: random chains of a few amino acids each. They also determine the masses of these chains. Using all this information, they can figure out the amino acid sequence of a peptide. If they identify several peptides from the same protein, they know which protein they are dealing with.

Mann developed the first algorithm to solve this complicated puzzle. ‘It is very difficult to determine from all those different masses of protein fragments exactly how the protein is put together,’ he says. ‘One morning, it suddenly occurred to me how we could do this. I programmed the idea, and it turned out it worked.’ As peptides are chopped into random fragments, groups of fragments are also created that differ by only one amino acid each. Mann’s algorithm searches for such groups and uses this information to reconstruct pieces of the amino acid sequence. It then searches a database to see which known peptide contains all these sequences, and also has the correct total mass. ‘Looking back, this has been the most important moment of my career,’ says Mann. ‘Thanks to this algorithm, a lot of important proteins were later discovered.’
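The search for fragment groups that differ by one amino acid can be illustrated with a minimal Python sketch. It is not Mann's actual algorithm. It assumes an idealised, noise-free ladder of fragment masses and uses only a subset of the amino acid residue masses. The fragment masses and the three-peptide database are invented for illustration.

```python
# Monoisotopic residue masses in daltons for a subset of the 20 amino acids.
RESIDUE_MASS = {
    "G": 57.02146, "A": 71.03711, "S": 87.03203, "P": 97.05276,
    "V": 99.06841, "T": 101.04768, "L": 113.08406, "D": 115.02694,
    "E": 129.04259, "F": 147.06841,
}
WATER = 18.01056  # mass of one water molecule, added once per intact peptide


def residue_for(delta, tol=0.01):
    """Return the amino acid whose residue mass matches a mass difference."""
    for amino_acid, mass in RESIDUE_MASS.items():
        if abs(delta - mass) <= tol:
            return amino_acid
    return None


def sequence_tag(fragment_masses, tol=0.01):
    """Read a short sequence from fragments that each differ by one residue."""
    masses = sorted(fragment_masses)
    tag = []
    for lighter, heavier in zip(masses, masses[1:]):
        amino_acid = residue_for(heavier - lighter, tol)
        tag.append(amino_acid if amino_acid else "?")
    return "".join(tag)


def peptide_mass(sequence):
    """Total mass of a peptide: the sum of its residues plus one water."""
    return sum(RESIDUE_MASS[aa] for aa in sequence) + WATER


def search_database(tag, total_mass, database, tol=0.01):
    """Keep peptides that contain the tag and have the measured total mass."""
    return [
        peptide for peptide in database
        if tag in peptide and abs(peptide_mass(peptide) - total_mass) <= tol
    ]


# Hypothetical ladder: each fragment is one residue heavier than the previous.
ladder = [200.0, 257.02146, 328.05857, 427.12698]
tag = sequence_tag(ladder)

# Hypothetical database of known peptides.
database = ["SGAVLE", "PEGAVT", "TLDFSA"]
measured_total = peptide_mass("SGAVLE")
candidates = search_database(tag, measured_total, database)
print(tag, candidates)
```

Two of the three database peptides contain the tag, but only one also has the right total mass. This is why the combination of a short sequence and the overall peptide mass narrows the search so effectively.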

Electrical charge

Mass spectrometers work completely differently from kitchen scales. A crucial step of mass spectrometry is that you give the peptides an electrical charge. Then you can accelerate them with an electric field towards a detector, and the length of time they take on this journey indicates their mass. Lighter peptides will move faster towards the detector than heavier fragments, and so you can accurately determine the mass of each peptide.
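The relation between flight time and mass can be made concrete with a short Python sketch of an idealised time-of-flight measurement. It assumes that all of the electrical energy is converted into motion and that the peptide then drifts over a fixed distance. The voltage and tube length are illustrative values, not the settings of any particular instrument. Strictly speaking, such a measurement yields the mass per unit of charge, so the charge has to be known to recover the mass.

```python
import math

E_CHARGE = 1.602176634e-19  # elementary charge in coulombs
DALTON = 1.66053906660e-27  # one dalton in kilograms


def flight_time(mass_da, charge, voltage, length):
    """Time in seconds for an ion to drift `length` metres after acceleration.

    Energy balance: charge * e * voltage = 1/2 * m * v**2.
    """
    mass_kg = mass_da * DALTON
    speed = math.sqrt(2 * charge * E_CHARGE * voltage / mass_kg)
    return length / speed


def mass_from_time(time_s, charge, voltage, length):
    """Invert the relation above to get the mass in daltons."""
    return 2 * charge * E_CHARGE * voltage * (time_s / length) ** 2 / DALTON


# Assumed settings: 20 kV acceleration, 1 m flight tube, singly charged ions.
VOLTAGE, LENGTH, CHARGE = 20_000.0, 1.0, 1

t_light = flight_time(1000.0, CHARGE, VOLTAGE, LENGTH)
t_heavy = flight_time(2000.0, CHARGE, VOLTAGE, LENGTH)
print(f"1000 Da: {t_light * 1e6:.2f} microseconds")
print(f"2000 Da: {t_heavy * 1e6:.2f} microseconds")
```

In this idealised picture, a peptide that is twice as heavy takes about 1.4 times as long (the square root of two), which is why timing has to be very precise to tell similar masses apart.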

During his PhD, Mann worked on this crucial part: ensuring that the peptides receive an electrical charge. ‘My supervisor, John Fenn, was working on a cool technique to turn a solution of molecules into a spray with electrically charged molecules. At the time, nobody thought this would work, but I immediately thought it would be very exciting if it did. Then you could use this process, for example, to analyse proteins. I had a background in physics myself, and in my mind, these were extremely complex and interesting systems. We worked on this technique, and eventually succeeded in making it suitable for analysing proteins. In 2002, Fenn received the Nobel Prize in chemistry for this work.’

One drawback was that you needed large amounts of a particular protein, far more than is present in a cell, for example. Mann therefore later adapted the technique in his own lab to study very small amounts of proteins, so that mass spectrometry could actually be used to study the proteins of living systems.

Isotopes

‘Initially, the aim of proteomics was to create overviews of all the different proteins present in a sample, for example in an extract of a particular cell type,’ says Aebersold. ‘But for biologists it is even more interesting to find differences between different cell types or cell states, for example between healthy and diseased cells. You want to be able to compare how much of a particular protein is present in these different cells.’ To achieve quantitative comparisons, it is often insufficient to pass the two samples through the mass spectrometer one after the other. Due to the many complex processing steps in a mass spectrometer, sometimes a higher proportion of peptides makes it to the detector than at other times. This is not a problem if you only want to chart which proteins are present, but it is a problem if you want to know exactly how much of each protein there is.

To compare samples quantitatively, mass spectrometrists use stable isotopes. These are variants of the same chemical element that have a slightly different mass. Aebersold developed a method to attach labels with light or heavy isotopes to the protein fragments. This makes the protein fragments from one sample just slightly heavier than the equivalent protein fragments from the other sample. You can then mix both samples and send them together through the mass spectrometer. You now measure a slightly lighter and a slightly heavier version of each peptide. Because they went through the mass spectrometer at the same time, you can compare very precisely the abundances of these different variants. This way you can ultimately determine whether, for example, a sick person has more of a certain protein in their cells than a healthy person. Mann developed a variant of this method. In this process, you grow cells in a nutrient medium containing amino acids made with light or heavy isotopes, so that the cells build these variants into their proteins.
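Why measuring both samples together helps can be shown with a minimal Python sketch. It pairs each light peptide with its heavy twin and compares their signal strengths. The peak list and the mass difference of 8 daltons between the labels are assumed values for illustration, and real data would also require handling noise and overlapping peaks.

```python
def pair_ratios(peaks, mass_shift, tol=0.01):
    """Return (light mass, heavy/light intensity ratio) for each labelled pair.

    `peaks` is a list of (mass, intensity) tuples from one combined run.
    """
    ratios = []
    for light_mass, light_intensity in peaks:
        for heavy_mass, heavy_intensity in peaks:
            is_pair = abs((heavy_mass - light_mass) - mass_shift) <= tol
            if is_pair and light_intensity > 0:
                ratios.append((light_mass, heavy_intensity / light_intensity))
    return ratios


MASS_SHIFT = 8.0  # assumed mass difference between heavy and light label

# Hypothetical peaks: two peptides, each present in a light and a heavy form.
peaks = [
    (1000.50, 2.0e6), (1008.50, 4.0e6),  # twice as much in the heavy sample
    (1200.60, 3.0e6), (1208.60, 1.5e6),  # half as much in the heavy sample
]
ratios = pair_ratios(peaks, MASS_SHIFT)

# Simulate a run in which only half of all peptides reach the detector.
lossy_peaks = [(mass, intensity * 0.5) for mass, intensity in peaks]
lossy_ratios = pair_ratios(lossy_peaks, MASS_SHIFT)
print(ratios, lossy_ratios)
```

The absolute signals halve in the lossy run, but the ratios stay the same because both versions of a peptide suffer the same losses.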

‘With these methods, we can study proteins in blood, for example,’ says Mann. ‘These proteins are in contact with your organs. If, for example, you are developing liver disease, the amounts of protein in your blood may change. If we can spot that early, you can change your lifestyle and thus prevent getting sick.’ Mann and his colleagues are also studying whether they can detect the onset of miscarriages in the blood of pregnant women, in order to be able to intervene early here too. ‘The great thing is that you can use our techniques wherever proteins are involved. And proteins are actually involved in everything.’

‘With isotope labelling, it became possible to quantitatively compare more than two samples,’ says Aebersold. In the first decade of this century, the capabilities and performance of mass spectrometers increased rapidly, but a conceptual problem remained: the mass spectrometer always detected only a fraction of all peptides present. ‘There are about 12,000 different proteins in a human cell, and back then a mass spectrometer managed to detect a sufficient number of peptides to identify about 1,000 to 2,000 of them,’ says Aebersold. ‘That was a gigantic achievement, especially considering that during my PhD, it took me six months to determine the amino acid sequence of a single protein. But if you want to compare a lot of samples, you face a problem: the mass spectrometer detects a random proportion of peptides, and these are therefore not always peptides from the same 2,000 proteins. That makes it very difficult to compare the quantities of certain proteins.’

Targeted measurement

To solve this problem, Aebersold developed a technique called ‘targeted proteomics’. In targeted proteomics, you decide in advance which proteins you want to include in your study and set the mass spectrometer to specifically measure only these proteins. ‘This way, you could very accurately measure the amounts of predetermined sets of proteins,’ says Aebersold. ‘This proved to be a very powerful method, because it allowed us to study very specifically groups of proteins that we knew or suspected to play a role in a particular disease. We could compare the quantities of those proteins very consistently across hundreds of samples, allowing us to compare hundreds of patients. However, the method accommodated far fewer different proteins than you could measure with the non-targeted methods.’

Later, Aebersold and his colleagues developed a technique that did allow very large numbers of different proteins to be compared with great consistency across large numbers of samples. The big change was that before, the mass spectrometer chopped up the peptides one by one and therefore determined their identity sequentially. Aebersold’s method allowed groups of peptides to be chopped up and analysed in parallel. As you can imagine, that process creates a gigantic mess of data. ‘With conventional methods, you would be unable to do anything with the data that came out of this type of measurement,’ says Aebersold. ‘You then know the masses of fragments of about a hundred different peptides, but you do not know which fragments belong together. To resolve this issue, we developed algorithms that look for patterns belonging to specific peptides in the data. That way, we can determine the identity of a lot of peptides that are concurrently fragmented.’
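The idea of looking for known patterns in a mixed set of fragments can be illustrated with a simplified Python sketch. It is not the algorithm developed by Aebersold's group. It assumes a library that lists the expected fragment masses of each peptide and counts how many of them appear in the mixed measurement. The peptide names, the masses and the 80 per cent threshold are all hypothetical. Real methods use further evidence, such as fragment intensities and the moment at which fragments appear.

```python
def match_fraction(expected, observed, tol=0.01):
    """Fraction of a peptide's expected fragment masses found in the data."""
    hits = sum(
        any(abs(exp_mass - obs_mass) <= tol for obs_mass in observed)
        for exp_mass in expected
    )
    return hits / len(expected)


def identify(library, observed, min_fraction=0.8, tol=0.01):
    """Names of library peptides whose fragment pattern is present."""
    return sorted(
        name for name, fragments in library.items()
        if match_fraction(fragments, observed, tol) >= min_fraction
    )


# Hypothetical library: expected fragment masses for three peptides.
library = {
    "peptide_A": [175.12, 288.20, 401.29, 530.33],
    "peptide_B": [147.11, 260.20, 359.27, 488.31],
    "peptide_C": [175.12, 246.16, 345.22, 458.31],
}

# Mixed measurement: fragments of A and B together, plus one unrelated peak.
observed = [175.12, 288.20, 401.29, 530.33,
            147.11, 260.20, 359.27, 488.31, 612.40]

found = identify(library, observed)
print(found)
```

Peptide C shares a single fragment mass with peptide A, but one coincidence is not enough to pass the threshold. Requiring a whole pattern is what makes it possible to untangle peptides that were fragmented together.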

‘Initially, I was a bit sceptical about this method,’ says Mann. ‘That was related to the fact that Aebersold and his colleagues initially used this method in combination with mass spectrometers that were not very good. At the time, we developed a mass spectrometer together with a manufacturer that turned out to be quite suitable for this very technique. We joined forces and that had a big impact. Today, almost everyone in the research area uses this method. It was nice that after working in parallel for a long time, work in our two groups finally came together.’

Interaction network

To really understand how proteins build and control our bodies, you also need to map their interactions. Protein researchers use a kind of baiting technique for this purpose. In this process, you attach one of the proteins from a cell to a surface. Then you bring all the other proteins from the cell in contact with it. The proteins that normally interact with the bait protein in our body will now bind to it. Then you send the group of bound proteins through the mass spectrometer, and you can see exactly which proteins interact with the bait protein. You can repeat this with different bait proteins each time, mapping the network of all interactions.
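Repeating the experiment with different bait proteins amounts to building a network step by step, which a few lines of Python can sketch. The protein names and results below are invented for illustration, and the sketch assumes that every protein bound to the bait is a genuine partner, whereas real experiments must filter out proteins that stick non-specifically.

```python
from collections import defaultdict


def build_network(pulldowns):
    """Combine bait experiments into one undirected interaction network.

    `pulldowns` maps each bait protein to the proteins found bound to it.
    """
    network = defaultdict(set)
    for bait, bound_proteins in pulldowns.items():
        for protein in bound_proteins:
            if protein == bait:
                continue  # the bait itself is always detected; skip it
            network[bait].add(protein)
            network[protein].add(bait)
    return network


# Hypothetical results of three bait experiments.
pulldowns = {
    "protein_A": ["protein_B", "protein_C"],
    "protein_B": ["protein_A", "protein_D"],
    "protein_E": [],
}

network = build_network(pulldowns)
for protein in sorted(network):
    print(protein, "interacts with", sorted(network[protein]))
```

Protein D was never used as bait, yet it appears in the network because it was caught by protein B. Each additional bait experiment fills in more of the map.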

Mann is currently studying interactions between proteins of viruses in this way. ‘We have already done this for coronavirus proteins and want to start doing it with a few other research groups for all the viruses we know that could infect humans. There are about 10,000 of those. We hope that will teach us a lot about how these viruses invade and affect human cells, and how we can intervene in this.’

Aebersold has also done a lot of research on interactions between proteins, including in cancer cells. ‘We studied cancer cells from cell lines that have been used in laboratories for research for years,’ he says. ‘We found that these cells evolved new properties over time and studied the molecular basis of these. For example, we observed that some of these cells developed resistance to infection with Salmonella, a bacterium that normally invades these cells. By comparing the protein interaction networks of cells that resist Salmonella invasion and cells that do not, we could identify the molecular machinery that normally allows a Salmonella bacterium to enter the cell.’ Aebersold thinks this is also the direction patient research will take. ‘It starts with a patient in whom a certain change has occurred, for instance one or several genetic mutations causing a disease or resistance to a drug. We now have the techniques to trace the molecular changes induced by these genetic variants all the way to the interactions between proteins.’ A treatment can then be based on this information.

Pretty pictures

Not only has mass spectrometry taken off in biochemical research over the past decades, but imaging techniques are also rapidly improving. This enables three-dimensional imaging of tissues and cells in ever-higher resolution. ‘Many people think a mass spectrum is boring,’ laughs Mann. ‘Researchers working with these imaging techniques can create very pretty pictures. But you could also say: those are “just” pretty pictures. For example, they can image cancer cells that look different from normal ones, but they do not know what exactly these cells are doing.’

Mann and his colleagues are therefore trying to bring these two fields together. ‘For example, we do research on melanoma. With all the fancy imaging techniques, we can first image all the cells in and around the melanoma. We can then cut a number of different cells from the tissue, from outside the cancerous region to inside it, and analyse the proteins in them. This allows us to follow the process from normal cell to cancer cell in the same patient. For example, we can see that a particular signalling pathway – a series of proteins that activate each other – is malfunctioning. Then we can administer a drug that we know acts on precisely that signalling pathway. So, you can create a specific treatment for each patient. I hope we can actually implement this in hospitals in about five years’ time.’

Another important medical application of proteomics is Mann’s research into allergic skin reactions to drugs. ‘Fortunately, this is rare, but some people develop symptoms similar to severe burning,’ says Mann. ‘A third of these patients even die from this. It was unknown what caused this reaction, nor was there any proper treatment.’ Mann and his colleagues examined affected skin cells from these patients, and they saw that a specific signalling pathway in the immune cells was very much activated. ‘There already was a drug for this signalling pathway, which was used for something else. Recently, a research group in China used this drug to treat eight patients with this allergic reaction, and they all made a full recovery. Once the drug is authorised in Europe for this disease, dermatologists will start using it here too. This is one of the things I am most proud of: I started out as a fundamental physicist, and now, in the best case, we can help save human lives.’

Aebersold is also proud of all the medical applications that proteomics is generating, but he also stresses the importance for our fundamental knowledge. ‘We are now slowly but surely starting to unravel the complexity of living systems,’ says Aebersold. ‘It is fascinating how evolution has produced the enormous complexity of living systems. We now have the tools to decipher and try to understand this, not just in humans, but in the vast diversity of life there is on Earth, most of it still largely unexplored.’

CV

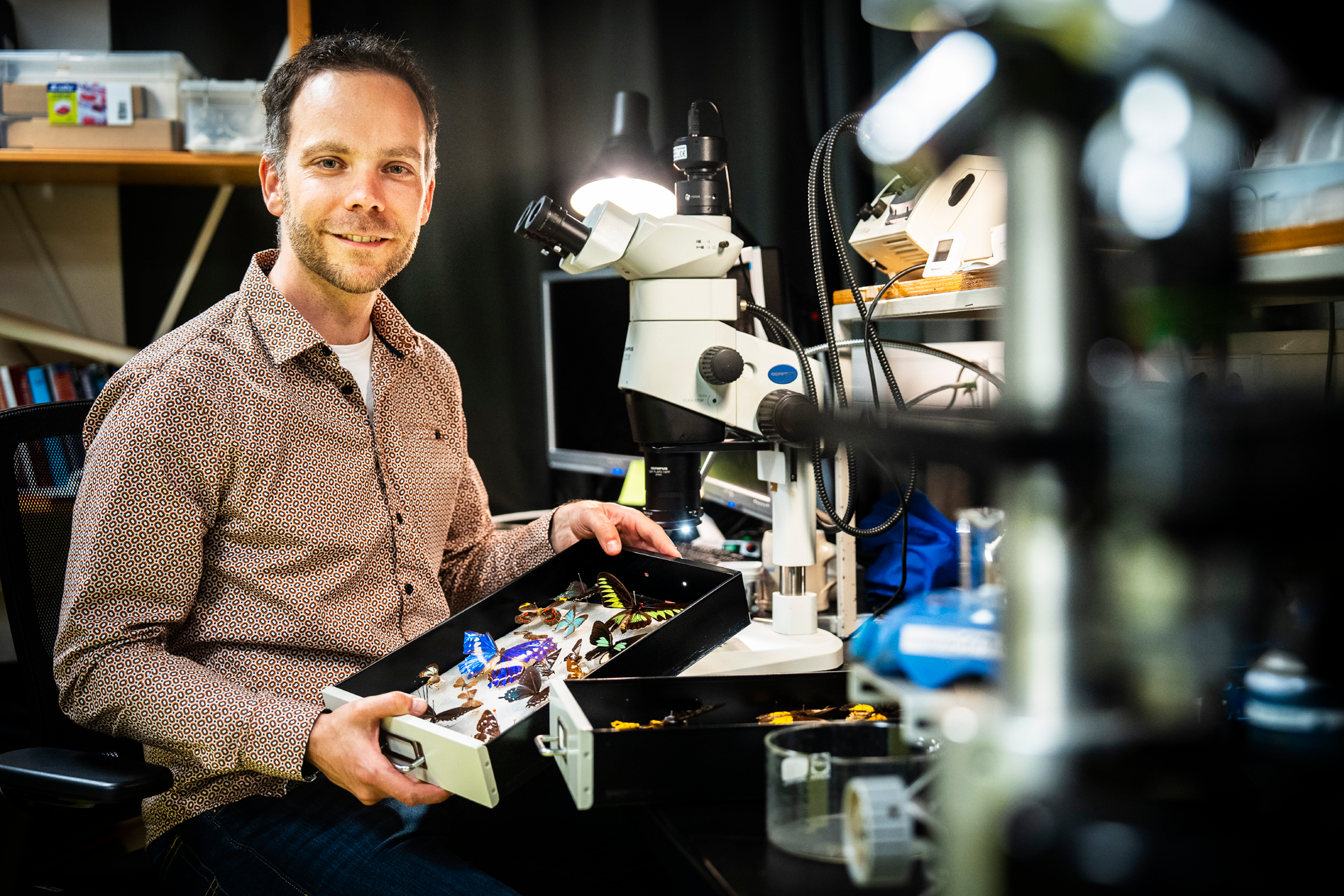

Ruedi Aebersold (Oberdiessbach, Switzerland, 1954) studied cellular biology at the University of Basel in Switzerland. He received his PhD there in 1983, also in cellular biology. After postdoctoral training at the California Institute of Technology, he was appointed assistant professor at the University of British Columbia in Vancouver in 1989. In 1993, he left for Seattle, where he was appointed professor of molecular biotechnology at the University of Washington in 1998. In 2000, he co-founded the world’s first Institute for Systems Biology in Seattle. In 2004, he moved back to Switzerland and became professor of systems biology at the ETH Zurich. He reached emeritus status in 2020.

CV

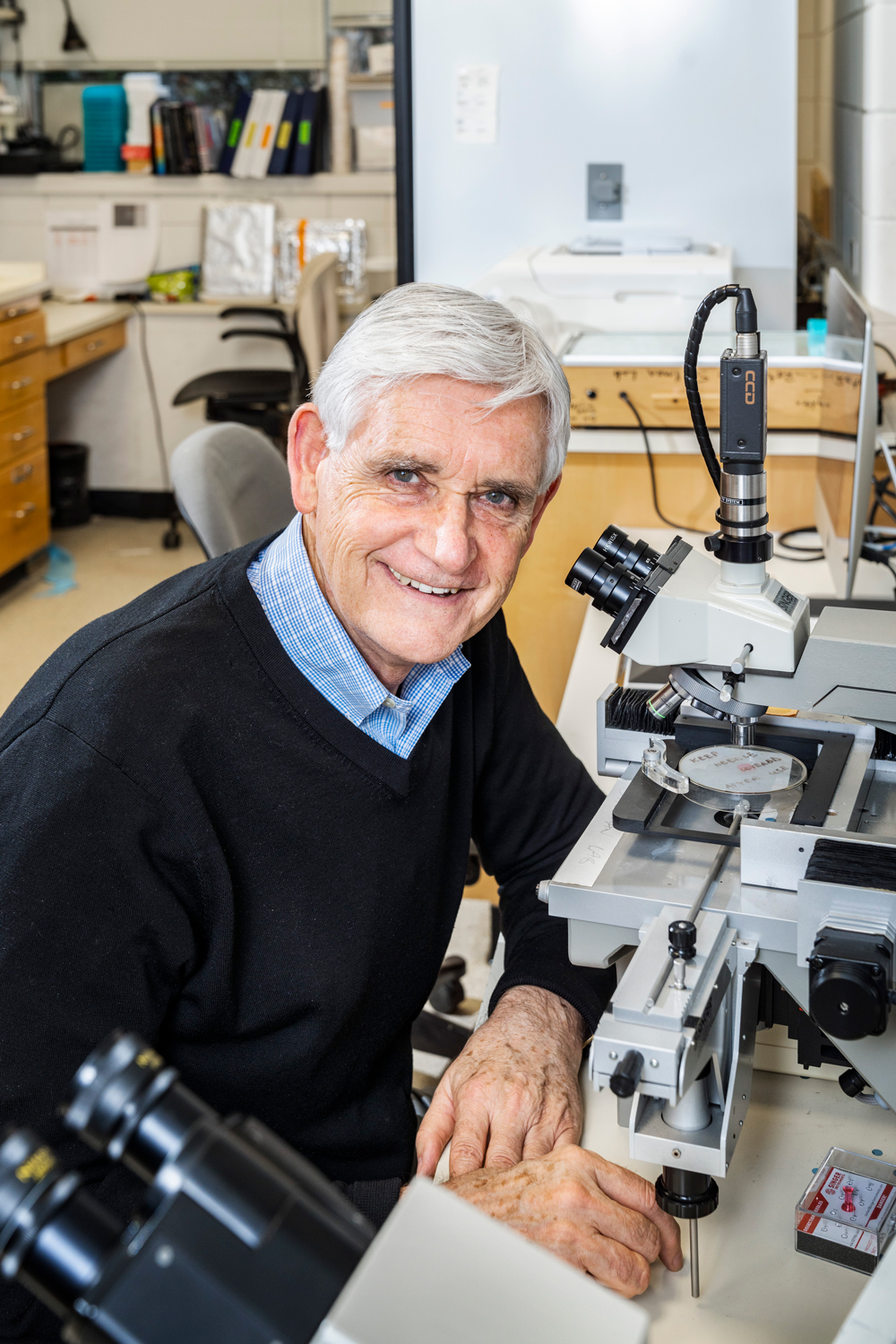

Matthias Mann (Thuine, Germany, 1959) studied physics and mathematics at the Georg August University in Göttingen. He received his PhD in chemical engineering from Yale University in the US in 1988. After a postdoctoral position at the University of Southern Denmark, he became group leader at the European Molecular Biology Laboratory in Heidelberg in 1992. In 1998, he was appointed professor of bioinformatics at the University of Southern Denmark, and he has been director of the Max Planck Institute for Biochemistry in Martinsried since 2005. Since 2007, he has simultaneously been director of the proteomics programme at the University of Copenhagen.

Heineken Prizes

In 1964, Alfred Heineken established the Dr H.P. Heineken Prize for Biochemistry and Biophysics, as a tribute to his father, Henry P. Heineken, who was himself a biochemist. In addition, the award was intended to highlight the importance of science to the brewing industry. The Heineken Prizes have since grown into internationally leading awards for top scientists.